Exploring VMware Backup Options: Enhancing Data Protection with Catalogic DPX

In the virtualization world, VMware is one of the key players, offering a robust platform for managing virtual machines (VMs) across various settings. Given the importance of the data and applications housed within these VMs, having a solid backup plan is not just advisable; it’s essential. This note surveys the available VMware backup options and their distinct features and advantages. We’ll also examine how Catalogic DPX steps in to refine and elevate these backup strategies.

Best VMware Backup Options for Data Protection

VMware backup options span several mechanisms, each with advantages suited to maintaining data integrity, reducing downtime, and enabling rapid recovery after a disruption. Understanding these options is key to developing a robust backup strategy that protects data and aligns with the organization’s operational goals.

Snapshot-Based Backups

Snapshot-based backups in VMware are akin to taking a point-in-time photograph of a VM, which includes its current state and data. This method is quick and can be useful for temporary rollback purposes, such as before applying patches or updates. However, snapshots are not full backups; they depend on the existing VM files and can lead to performance degradation over time if not managed properly. Snapshots should be part of a broader backup strategy, as they do not protect against VM file corruption or loss.
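To make the dependency on existing VM files concrete, here is a minimal toy model in Python. It is only a sketch: the `ToyDisk` class and its methods are hypothetical names invented for illustration, and real VMware snapshots use delta disk files rather than dictionaries. The point it shows is that a snapshot stores only the blocks changed after it was taken, so it cannot stand in for a backup if the base disk is lost.

```python
# Toy model only: the class and method names are hypothetical, not a VMware API.

class ToyDisk:
    """A base disk plus an ordered chain of snapshot deltas."""

    def __init__(self, blocks):
        self.base = dict(blocks)   # block number -> data
        self.deltas = []           # one dict of changed blocks per snapshot

    def take_snapshot(self):
        # A snapshot freezes the current state; later writes go to a new delta.
        self.deltas.append({})

    def write(self, block, data):
        # Writes land in the newest delta if a snapshot exists, else in the base.
        target = self.deltas[-1] if self.deltas else self.base
        target[block] = data

    def read(self, block, upto=None):
        # Walk the chain from the newest delta back to the base disk.
        # upto=n reads the state frozen by snapshot n (0 = first snapshot).
        chain = self.deltas if upto is None else self.deltas[:upto]
        for delta in reversed(chain):
            if block in delta:
                return delta[block]
        return self.base[block]


disk = ToyDisk({0: "os", 1: "app-v1"})
disk.take_snapshot()                 # taken before applying a patch
disk.write(1, "app-v2")              # the patch changes block 1

current = disk.read(1)               # "app-v2"
rolled_back = disk.read(1, upto=0)   # "app-v1", the pre-patch view

# Simulate losing the base disk: the delta alone holds only block 1.
disk.base.clear()
try:
    disk.read(0)
    recoverable = True
except KeyError:
    recoverable = False              # block 0 existed only in the base
```

In this model the rollback works while the base disk is intact, which matches the pre-patch use case, but once the base is gone the snapshot delta cannot reconstruct the VM on its own.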

Agent-Based Backups

Agent-based backups involve installing backup software within the guest operating system of each VM. This method allows for fine-grained control over the backup process and can accommodate specific application requirements. However, it introduces additional overhead, as each VM requires its own backup agent configuration and consumes resources during the backup process. This approach can be resource-intensive and may not scale well in environments with a large number of VMs.

Agentless Backups

Agentless backups offer a more streamlined approach by interacting directly with the VMware hypervisor to back up VMs without installing agents within them. This reduces the resource footprint on VMs and simplifies management. Agentless backups use VMware’s APIs to capture each VM in a consistent state, which is crucial for applications such as databases.

Incremental and Differential Backups

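Incremental backups capture only the changes made since the last backup of any kind, while differential backups capture all changes since the last full backup. Both methods are designed to optimize storage usage and reduce backup time by not copying unchanged data, and both require an initial full backup. The trade-off shows up at restore time: an incremental restore needs the full backup plus every incremental taken since, whereas a differential restore needs only the full backup and the most recent differential, at the cost of differentials growing larger until the next full backup. Both are particularly useful for environments where data changes are relatively infrequent.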
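The difference between the two methods can be sketched with a small Python example. This is an illustration under simplifying assumptions, not how any particular product stores data: disks are modeled as dictionaries of fixed blocks, and `changed_blocks` and `restore` are hypothetical helper names.

```python
def changed_blocks(old, new):
    """Return the blocks in `new` whose content differs from `old`."""
    return {k: v for k, v in new.items() if old.get(k) != v}


def restore(full, *deltas):
    """Apply backups in order on top of a copy of the full backup."""
    state = dict(full)
    for delta in deltas:
        state.update(delta)
    return state


# Disk state at the end of each day, as block number -> content.
day0 = {0: "a", 1: "b", 2: "c"}   # full backup taken here
day1 = {0: "a", 1: "B", 2: "c"}   # block 1 changed
day2 = {0: "a", 1: "B", 2: "C"}   # block 2 changed

full = dict(day0)

# Incremental: changes since the previous backup of any kind.
inc1 = changed_blocks(day0, day1)   # {1: "B"}
inc2 = changed_blocks(day1, day2)   # {2: "C"}

# Differential: changes since the last full backup.
diff1 = changed_blocks(day0, day1)  # {1: "B"}
diff2 = changed_blocks(day0, day2)  # {1: "B", 2: "C"}

# Restoring day 2 needs the whole incremental chain...
from_incrementals = restore(full, inc1, inc2)
# ...but only the latest differential.
from_differential = restore(full, diff2)
```

Both restores reproduce the day 2 state. The second incremental is smaller than the second differential, while the differential restore touches fewer backup sets.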

Cloud-Based and Off-Site Backups

Cloud-based backups involve storing VM backups in a cloud storage service, providing scalability, flexibility, and off-site data protection. This approach is essential for disaster recovery, as it ensures geographic redundancy. Cloud-based backups can be automated and managed through VMware’s native tools or third-party solutions, ensuring secure and efficient off-site data storage.

Integrating Catalogic DPX in VMware Backup Strategies

Catalogic DPX is a data protection solution that integrates with VMware environments and supports both agent-based and agentless backups. Its deployment can be tailored to the specific needs of the VMware infrastructure.

Key features of Catalogic DPX include:

- Application-Aware Backups: Produce consistent backups of applications running within VMware VMs, which is especially important for databases and transactional systems.

- Block-Level Incremental Backups: Minimize storage requirements and accelerate the backup process by capturing only the blocks that have changed.

- Instant Recovery: Enables rapid recovery of VMware VMs directly from backup storage, minimizing downtime during disaster recovery.

- Global Deduplication: Reduces storage consumption across all backups by eliminating redundant data.
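As a rough illustration of the idea behind deduplication across backups, the following Python sketch stores each unique chunk once, keyed by its content hash. It is not a description of how Catalogic DPX implements the feature: the `DedupStore` class, the fixed chunk size, and the choice of SHA-256 are all assumptions made for the example.

```python
import hashlib


class DedupStore:
    """Hypothetical chunk store that keeps one copy of each unique chunk."""

    def __init__(self, chunk_size=4):
        self.chunk_size = chunk_size
        self.chunks = {}  # SHA-256 hex digest -> chunk bytes

    def put(self, data: bytes):
        """Store a backup and return its recipe (ordered list of digests)."""
        recipe = []
        for i in range(0, len(data), self.chunk_size):
            chunk = data[i:i + self.chunk_size]
            digest = hashlib.sha256(chunk).hexdigest()
            # Only the first occurrence of a chunk consumes storage.
            self.chunks.setdefault(digest, chunk)
            recipe.append(digest)
        return recipe

    def get(self, recipe):
        """Rebuild a backup from its recipe."""
        return b"".join(self.chunks[d] for d in recipe)


store = DedupStore(chunk_size=4)
backup_a = store.put(b"AAAABBBBCCCC")   # three new chunks
backup_b = store.put(b"AAAAXXXXCCCC")   # only "XXXX" is new

unique_chunks = len(store.chunks)       # 4 chunks stored for 6 written
restored_b = store.get(backup_b)
```

Two backups of three chunks each end up as four stored chunks, because the two chunks they share are kept once. Production systems typically add concerns this sketch ignores, such as persistent indexes and chunk reference counting.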

Catalogic DPX enhances VMware backup strategies by providing a comprehensive, efficient, and scalable backup solution. Its integration with VMware’s APIs and support for both physical and virtual environments make it a versatile backup tool for ensuring data integrity and availability.

Use Catalogic DPX with VMware for Flexible and Reliable Backups

The choice of a VMware backup solution should be tailored to the distinct needs of your virtual environment, taking into account recovery goals, storage needs, and operational complexity. By integrating Catalogic DPX into your VMware backup and disaster recovery plan, you enhance your data protection strategy. Catalogic DPX’s features support efficient and dependable backups, along with rapid restoration.

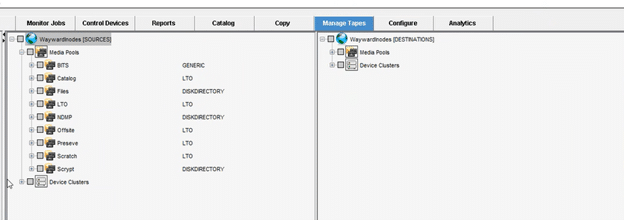

The tape migration process can also be helpful for moving media of type DISKDIRECTORY.
