Cost-Effective Data Protection: IT Manager’s Proven Recipe to Maximize Savings

As an IT manager, you’re constantly walking a tightrope between ensuring robust data protection and managing tight budgets. It’s no secret that new hardware is expensive, and organizations often feel the pinch when they are forced to buy the latest equipment just to keep up with growing data protection needs. But what if there were a way to improve your data protection strategy without breaking the bank? What if you could use the hardware you already have, extending its life and maximizing your investment? That’s exactly what this guide aims to help you do: build cost-effective data protection.

The Reality of Data Protection Costs

Let’s face it: data protection isn’t optional. With cyber threats on the rise and regulations like SOX (the Sarbanes-Oxley Act), GDPR, and HIPAA demanding stricter data controls, organizations are under more pressure than ever to ensure their data is safe, secure, and recoverable. However, the costs of achieving this can be daunting. New hardware purchases, particularly for storage and backup, can be a significant burden on IT budgets.

According to a survey by ESG (Enterprise Strategy Group), many organizations report that hardware costs account for a substantial portion of their IT spending, especially in areas related to data protection and storage. This is where the idea of repurposing existing hardware comes into play. By leveraging what you already have, you can reduce the need for new investments while still meeting your data protection goals.

The Case for Leveraging Existing Infrastructure

Before diving into the how-tos, it’s worth asking why repurposing existing hardware deserves the effort. First and foremost, it’s cost-effective. Instead of allocating a chunk of your budget to new storage systems, you can extend the life of your current hardware, freeing up funds for other critical IT initiatives.

Additionally, repurposing existing infrastructure aligns with sustainability goals. By making the most of what you already have, you reduce e-waste and the environmental impact associated with producing and disposing of electronic equipment.

Finally, there’s the aspect of familiarity. Your IT team already knows the ins and outs of your current hardware, which means less time spent on training and a smoother implementation process when repurposing it for new data protection tasks.

Understanding Your Current Hardware Capabilities

The first step in leveraging existing hardware for data protection is to thoroughly assess what you have. This means taking stock of your current servers, storage devices, and network infrastructure to understand their capabilities and limitations. You need to consider the following aspects of your hardware:

- Evaluate Storage Capacity: Determine how much storage space is available and how it’s currently being used. Are there underutilized storage arrays that could be repurposed for backup? Are older devices still performing well enough to handle additional workloads?

- Assess Performance: Benchmark your existing hardware against the workloads you plan to give it. It might not be the latest model, but it could still have plenty of life left for less demanding tasks like backup and archiving.

- Check for Compatibility: Ensure that your existing hardware is compatible with the data protection software you plan to use. This includes checking for the right interfaces, protocols, and firmware updates that might be necessary for seamless integration.

- Analyze Network Bandwidth: Consider the impact of adding backup tasks to your network. Ensure that your network can handle the additional traffic without degrading performance for other critical applications.
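The storage and bandwidth checks above come down to simple arithmetic. The Python sketch below estimates how long a full backup would take over a given link and whether a candidate target volume has room for it. The function names, the 70% usable-bandwidth default, and the 20% free-space reserve are illustrative assumptions, not DPX settings, and the estimate ignores compression, deduplication, and protocol overhead.

```python
import shutil


def backup_window_hours(data_gb: float, link_gbps: float, usable_fraction: float = 0.7) -> float:
    """Estimate hours needed to move data_gb gigabytes over a link_gbps link.

    usable_fraction is an assumed share of the link given to backup traffic,
    leaving the rest for production applications.
    """
    if link_gbps <= 0 or not 0 < usable_fraction <= 1:
        raise ValueError("link_gbps must be positive and usable_fraction in (0, 1]")
    gigabits = data_gb * 8  # gigabytes -> gigabits
    seconds = gigabits / (link_gbps * usable_fraction)
    return seconds / 3600


def has_headroom(path: str, required_bytes: int, reserve_fraction: float = 0.2) -> bool:
    """Return True if the volume holding `path` can absorb required_bytes
    while still keeping reserve_fraction of its total size free."""
    usage = shutil.disk_usage(path)  # named tuple: total, used, free (bytes)
    reserve = usage.total * reserve_fraction
    return usage.free - required_bytes >= reserve


if __name__ == "__main__":
    hours = backup_window_hours(2000, 1.0)
    print(f"Full backup of 2,000 GB over 1 Gbps: about {hours:.1f} hours")
    # "." stands in for a mount point on a repurposed storage array
    print("Target has headroom for 500 GB:", has_headroom(".", 500 * 10**9))
```

Under these assumptions, a 2,000 GB full backup over a 1 Gbps link takes roughly 6.3 hours, which tells you quickly whether it fits your nightly window or whether the schedule needs rethinking.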

Catalogic DPX: A Cost-Effective Data Protection Solution for Repurposing Hardware

We’ve been developing Catalogic DPX long enough to see how hardware evolves. That experience has shaped DPX to integrate seamlessly with a wide variety of existing hardware setups, making it an ideal choice for organizations looking to repurpose their infrastructure. Whether you’re working with older servers, storage arrays, or tape libraries, DPX lets you extend the life of your hardware by turning it into a robust data protection platform.

Key Features of DPX That Support Existing Hardware

Catalogic DPX offers several key features that enable organizations to leverage their existing hardware effectively for data protection:

- Software-Defined Storage: One of the standout features of DPX is vStor, its software-defined storage capability. vStor lets you use your existing storage hardware, whether direct-attached storage (DAS), network-attached storage (NAS), or a storage area network (SAN), to create a flexible, scalable backup solution. By decoupling the software from the hardware, Catalogic vStor lets you make full use of your current infrastructure without investing in new storage.

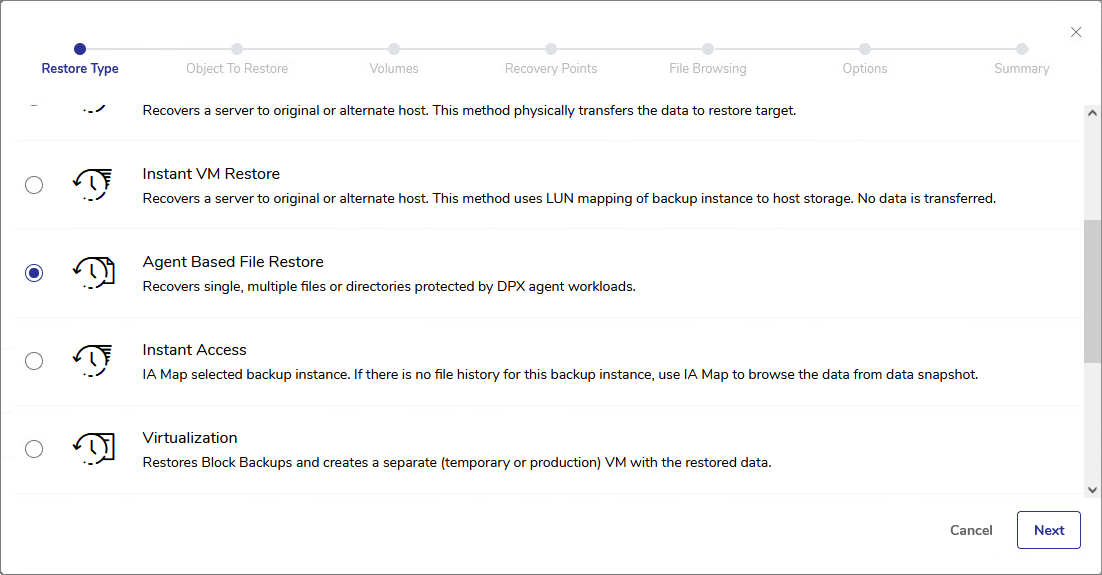

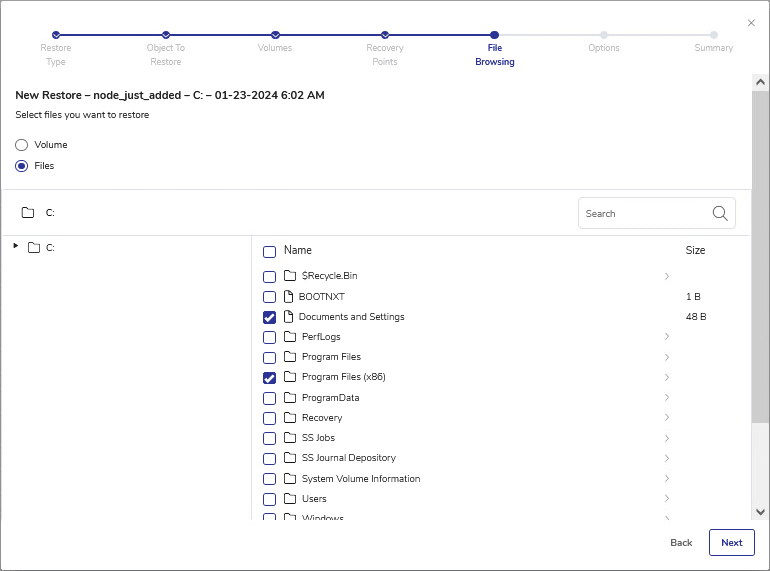

- Agentless Backup for Virtual Environments: If your organization relies heavily on virtual machines, DPX’s agentless backup capabilities are a significant benefit. This feature reduces the load on your servers by eliminating the need for additional software agents on each VM. Instead, DPX interacts directly with the hypervisor, simplifying the backup process and allowing you to use existing hardware more efficiently.

- Integration with Existing Tape Libraries: For organizations that still rely on tape for long-term storage, DPX offers seamless integration with existing tape libraries. This is particularly valuable for organizations with strict compliance obligations, such as public companies subject to SOX. By repurposing your tape infrastructure, you can continue to meet regulatory requirements without new hardware investments.

- Flexibility with Storage Targets: DPX allows you to choose from a wide range of storage targets for your backups, including cloud, disk, and tape. This flexibility means you can optimize your storage strategy based on the hardware you already have rather than being forced to buy new equipment.

Implementing a Hardware Repurposing Strategy

Now that you have a sense of what’s possible, let’s talk about how to implement a strategy for repurposing your existing hardware for truly cost-effective data protection. Here are five key steps to consider:

- Plan and Prioritize: Start by identifying your organization’s most critical data protection needs. Is your top priority ensuring quick recovery times for your most important applications? Or is it about meeting long-term archiving requirements for compliance? Understanding your goals will help you prioritize which hardware to repurpose and how to configure it.

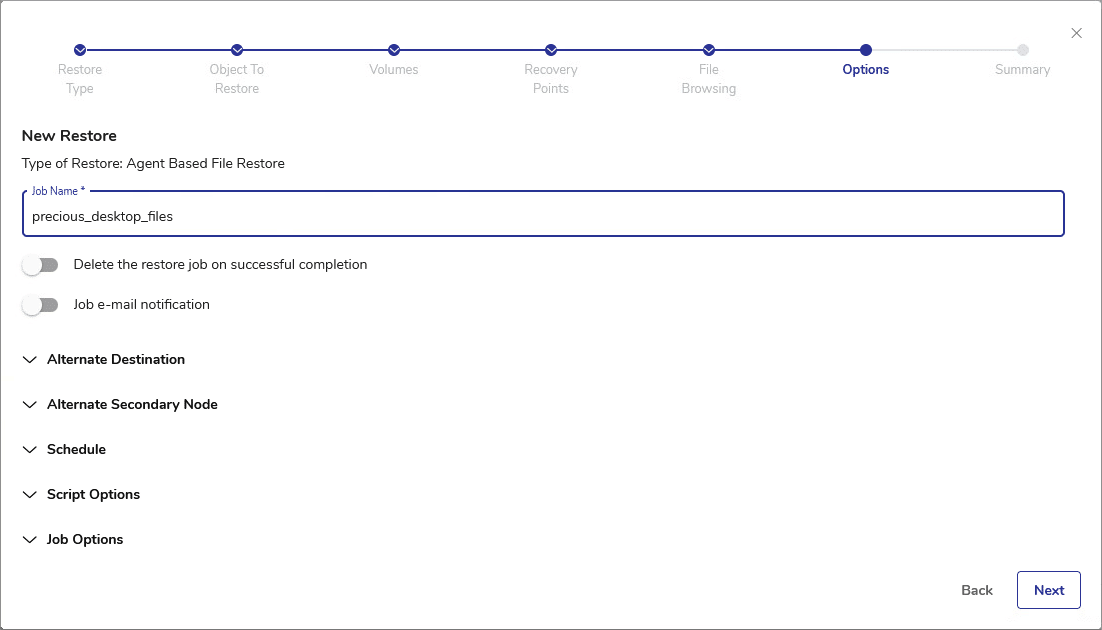

- Test and Validate: Before fully committing to repurposing your hardware, it’s crucial to test and validate the setup. This includes running backup and restore tests to ensure that your existing infrastructure can handle the new workloads without compromising performance. Make sure to document the results and adjust your configuration as needed.

- Optimize for Performance: While repurposing existing hardware can save money, it’s important to optimize your setup for performance. This might involve reconfiguring storage arrays, upgrading network components, or adjusting backup schedules to minimize the impact on your production environment.

- Ensure Compliance: As mentioned earlier, compliance with regulations like SOX, GDPR, and HIPAA is non-negotiable. When repurposing hardware, ensure that your data protection setup meets all relevant regulatory requirements. This might involve implementing immutability features to prevent unauthorized changes to backups, as well as ensuring that all data is encrypted both in transit and at rest.

- Monitor and Maintain: Once your repurposed hardware is up and running, it’s essential to monitor its performance and make adjustments as needed. Regularly check for firmware updates, monitor storage capacity, and keep an eye on network performance to ensure that your data protection strategy remains effective.
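The test-and-validate step is easy to automate at a basic level: restore a sample of data to a scratch location and confirm it matches the source byte for byte. This Python sketch does that with SHA-256 checksums from the standard library. It is independent of any backup product; `verify_restore` and `sha256_of` are hypothetical helper names, and comparing against a live source only works for data that hasn’t changed since the backup ran.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in 1 MiB chunks so large files never need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_restore(source_dir: str, restored_dir: str) -> list[str]:
    """Compare every file under source_dir with its counterpart under restored_dir.

    Returns a sorted list of problems ("missing: ..." or "differs: ...");
    an empty list means the test restore matched the source.
    """
    source_root, restored_root = Path(source_dir), Path(restored_dir)
    problems = []
    for src in sorted(p for p in source_root.rglob("*") if p.is_file()):
        rel = src.relative_to(source_root)
        restored = restored_root / rel
        if not restored.is_file():
            problems.append(f"missing: {rel}")
        elif sha256_of(src) != sha256_of(restored):
            problems.append(f"differs: {rel}")
    return problems
```

For example, after restoring a test folder, `verify_restore("/data/finance", "/mnt/restore-test/finance")` (both paths hypothetical) should return an empty list; anything else is a finding to document and investigate. Rerunning the same check on a schedule also covers part of the monitor-and-maintain step.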

Examples of Similar Solutions

While Catalogic DPX offers a robust platform for repurposing existing hardware, it’s not the only option out there. Here are a few other solutions that let you leverage your current infrastructure for data protection. (Licensing and cost models also differ among them, but that’s a different topic.)

- Veeam Backup & Replication: Veeam offers a flexible backup solution that can integrate with existing hardware, including NAS, SAN, and even tape storage. Veeam’s scalability and support for a wide range of storage targets make it a popular choice for organizations looking to repurpose their infrastructure.

- Commvault Complete Backup & Recovery: Commvault provides a comprehensive data protection platform that supports a variety of storage options. Like DPX, Commvault allows organizations to use their existing hardware, including older storage arrays and tape libraries, to build a cost-effective backup solution.

- Veritas NetBackup: Veritas is known for its enterprise-grade data protection capabilities. NetBackup offers flexible deployment options that allow organizations to use their current storage infrastructure, including cloud, disk, and tape, to meet their data protection needs.

Meeting SOX and Other Regulatory Requirements

Let’s circle back to compliance for a moment. Regulations like SOX require organizations to maintain rigorous controls over their financial data, including ensuring the integrity and availability of backups. Repurposed hardware can help you meet these requirements cost-effectively, as long as the backups it holds are protected, retained, and regularly tested.

For example, SOX mandates that organizations maintain a reliable system for archiving and retrieving financial records. By leveraging existing tape libraries or storage arrays, you can ensure that your archived data remains secure and accessible without the need for new investments.

Similarly, GDPR requires organizations to protect personal data with appropriate security measures. Encrypting the backups you store on repurposed hardware is one such measure, helping you address this requirement while maximizing the value of your existing infrastructure.

Making the Most of What You Have

In today’s budget-conscious IT environment, finding ways to do more with less is key to success. By repurposing existing hardware for data protection, you can reduce costs, extend the life of your infrastructure, and still meet the stringent requirements of modern data protection regulations.

Whether you’re using Catalogic DPX, Veeam, Commvault, or another solution, the principles are the same: assess your current hardware, optimize it for data protection tasks, and ensure compliance with relevant regulations. With a well-thought-out strategy, you can build a cost-effective data protection solution that leverages the investments you’ve already made, setting your organization up for long-term success.

For IT managers seeking to streamline their data protection strategy while leveraging existing hardware, Catalogic DPX offers a solution worth exploring. It combines simplicity, cost-effectiveness, and robust security features to help organizations make the most of their current infrastructure.